Why can’t I just use a matrix to solve ARMA?

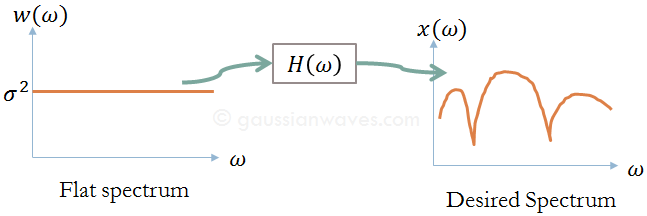

Key focus: “Why can’t I just use a matrix to solve ARMA?” The answer is right there in the shape of the surface—you can’t solve a “warped” landscape with a linear equation. Introduction In signal modeling, our goal is to find a set of coefficients (ak and bk) that best describe an observed signal. We … Read more