The aim of this series of articles is to introduce the idea of autocorrelation, the AutoCorrelation Function (ACF) and the Partial AutoCorrelation Function (PACF), and to show how ACF and PACF are used in system identification.

Introduction

Given time series data (stock market data, sunspot numbers over a period of years, signal samples received over a communication channel, etc.), successive values in the series often correlate with each other. This serial correlation is termed persistence or inertia, and it leads to increased power at the lower frequencies of the spectrum. Persistence can drastically reduce the degrees of freedom in time series modeling (AR, MA, ARMA models). It also complicates tests of statistical significance, because it reduces the number of independent observations.
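How much persistence shrinks the sample can be put into numbers with a widely used rule of thumb. Assuming the persistence follows an AR(1) pattern with lag-1 autocorrelation r1, the effective number of independent observations among n samples is often approximated as n(1 - r1)/(1 + r1). The sketch below only evaluates that approximation; the function name is illustrative.

```python
def effective_sample_size(n: int, r1: float) -> float:
    """Approximate number of independent observations among n samples
    of an AR(1)-like series whose lag-1 autocorrelation is r1.

    Rule of thumb (an assumption of this sketch):
        n_eff = n * (1 - r1) / (1 + r1), meaningful for -1 < r1 < 1.
    """
    if not -1.0 < r1 < 1.0:
        raise ValueError("r1 must lie strictly between -1 and 1")
    return n * (1.0 - r1) / (1.0 + r1)


if __name__ == "__main__":
    for r1 in (0.0, 0.5, 0.9):
        print(f"r1 = {r1}: about {effective_sample_size(1000, r1):.0f} "
              "independent observations out of 1000")
```

With 1000 samples, r1 = 0.5 leaves roughly 333 effective observations and r1 = 0.9 only about 53.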

Autocorrelation function

The correlation of a time series with its own past and future values is called autocorrelation. It is also referred to as lagged or serial correlation. Positive autocorrelation is an indication of a specific form of persistence: the tendency of a system to remain in the same state from one observation to the next (for example, continuous runs of 0’s or 1’s). If a time series exhibits autocorrelation, its future values depend probabilistically on the current and past samples. Thus the existence of autocorrelation can be exploited both in prediction and in modeling time series. Autocorrelation can be assessed using the following tools:

● Time series plot

● Lagged scatterplot

● AutoCorrelation Function (ACF)
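To make the prediction argument concrete, here is a minimal sketch assuming a zero-start AR(1) process with unit-variance Gaussian noise. The lag-1 sample autocorrelation r1 serves as the coefficient of a one-step predictor, and its mean squared error is compared with that of always predicting the sample mean. The helper names, the coefficient 0.5 and the seed are illustrative choices, and the errors are measured in-sample.

```python
import random


def simulate_ar1(a: float, n: int, seed: int) -> list[float]:
    """x[n] = a*x[n-1] + w[n] with w[n] ~ N(0, 1), starting from x[-1] = 0."""
    rng = random.Random(seed)
    x, prev = [], 0.0
    for _ in range(n):
        prev = a * prev + rng.gauss(0.0, 1.0)
        x.append(prev)
    return x


def lag1_autocorr(x: list[float]) -> float:
    """Sample lag-1 autocorrelation coefficient with the mean removed."""
    m = sum(x) / len(x)
    d = [v - m for v in x]
    num = sum(d[i] * d[i + 1] for i in range(len(d) - 1))
    return num / sum(v * v for v in d)


def one_step_mse(x: list[float]) -> tuple[float, float]:
    """In-sample MSE of x_hat[n+1] = m + r1*(x[n] - m) and of x_hat[n+1] = m."""
    m = sum(x) / len(x)
    r1 = lag1_autocorr(x)
    pairs = list(zip(x, x[1:]))
    mse_ar = sum((nxt - (m + r1 * (cur - m))) ** 2 for cur, nxt in pairs) / len(pairs)
    mse_mean = sum((nxt - m) ** 2 for _, nxt in pairs) / len(pairs)
    return mse_ar, mse_mean


if __name__ == "__main__":
    series = simulate_ar1(0.5, 10_000, seed=1)
    mse_ar, mse_mean = one_step_mse(series)
    print(f"r1 = {lag1_autocorr(series):.3f}")
    print(f"MSE using autocorrelation: {mse_ar:.3f}, MSE using the mean: {mse_mean:.3f}")
```

For a = 0.5 and unit noise variance, the first MSE should come out close to the noise variance 1 and the second close to the process variance 1/(1 - 0.5²) = 4/3, so exploiting the lag-1 correlation removes roughly a quarter of the prediction error.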

Generating a sample time series

For the purpose of illustration, let’s begin by generating two time series using an AutoRegressive AR(1) process. An AR(1) process relates the current output sample x[n] of an LTI system to its immediately preceding sample x[n-1] and a white noise term w[n].

A generic AR(1) system is given by

$latex a_0x[n]= c + a_1 x[n-1] + w[n] \quad\quad ,w[n] \sim N(0,\sigma^2) &s=2$

Here $latex a_0$ and $latex a_1$ are the model parameters, which we will tweak to generate different sets of time series data, and $latex c$ is a constant, which we set to zero ($latex c=0$). Thus the model can be equivalently written as

$latex a_0x[n]- a_1 x[n-1] = w[n] \quad\quad ,w[n] \sim N(0,\sigma^2) &s=2$
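Solving the generic model for the current sample gives the recursion x[n] = (c + a_1 x[n-1] + w[n]) / a_0, which is only defined when a_0 ≠ 0. The sketch below simulates it directly, assuming the process starts from x[-1] = 0 and σ = 1 by default; the function name `simulate_generic_ar1` is hypothetical.

```python
import random


def simulate_generic_ar1(a0: float, a1: float, n: int, c: float = 0.0,
                         sigma: float = 1.0, seed: int = 0) -> list[float]:
    """Simulate a0*x[n] = c + a1*x[n-1] + w[n], w[n] ~ N(0, sigma^2).

    Solves for x[n] at each step; the recursion starts from x[-1] = 0.
    """
    if a0 == 0:
        raise ValueError("a0 must be non-zero, otherwise x[n] is undetermined")
    rng = random.Random(seed)
    x, prev = [], 0.0
    for _ in range(n):
        prev = (c + a1 * prev + rng.gauss(0.0, sigma)) / a0
        x.append(prev)
    return x


if __name__ == "__main__":
    model1 = simulate_generic_ar1(1.0, 1.0, 1000, seed=1)  # x[n] - x[n-1] = w[n]
    model2 = simulate_generic_ar1(1.0, 0.5, 1000, seed=2)  # x[n] - 0.5x[n-1] = w[n]
    print(model1[:3], model2[:3])
    # With the noise switched off, x[n] approaches c / (a0 - a1) when |a1/a0| < 1.
    print(simulate_generic_ar1(1.0, 0.5, 5, c=1.0, sigma=0.0))
    # -> [1.0, 1.5, 1.75, 1.875, 1.9375]
```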

Let’s generate two time series from the above model.

Model 1: a0=1, a1=1

$latex x[n]- x[n-1] = w[n] \quad\quad ,w[n] \sim N(0,\sigma^2) &s=2$

The filter function in Matlab will be used to generate the output process x[n]. In its basic form, X=filter(B,A,W), the function takes three inputs. The vectors B and A hold the numerator and denominator coefficients (here, the model parameters) of the transfer function of the LTI system in standard difference equation form, W is the white noise input to the LTI filter, and X is the filter output.
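The difference equation that filter(B,A,W) evaluates can be sketched in plain Python: a(1)y[n] = Σ b(k+1)w[n-k] - Σ a(k+1)y[n-k] for k ≥ 1, with zero initial conditions. This is an illustration of that convention, not Matlab's implementation; the name `lfilter_sketch` is hypothetical, and SciPy's `scipy.signal.lfilter(b, a, x)` follows the same convention.

```python
def lfilter_sketch(b: list[float], a: list[float], w: list[float]) -> list[float]:
    """Direct-form IIR filter with zero initial conditions.

    Implements a[0]*y[n] = sum_k b[k]*w[n-k] - sum_{k>=1} a[k]*y[n-k],
    the same difference-equation convention as Matlab's filter(B, A, W).
    """
    if a[0] == 0:
        raise ValueError("a[0] must be non-zero")
    y: list[float] = []
    for n in range(len(w)):
        acc = sum(b[k] * w[n - k] for k in range(len(b)) if n - k >= 0)
        acc -= sum(a[k] * y[n - k] for k in range(1, len(a)) if n - k >= 0)
        y.append(acc / a[0])
    return y


if __name__ == "__main__":
    # Model 1: B = 1, A = [1 -1] turns its input into a running sum.
    print(lfilter_sketch([1.0], [1.0, -1.0], [1.0, 2.0, 3.0, 4.0]))
    # -> [1.0, 3.0, 6.0, 10.0]
    # Model 2: B = 1, A = [1 -0.5]; its impulse response is 0.5**n.
    print(lfilter_sketch([1.0], [1.0, -0.5], [1.0, 0.0, 0.0, 0.0]))
    # -> [1.0, 0.5, 0.25, 0.125]
```

The running-sum behaviour shows why model 1 driven by white noise is a random walk: each output accumulates every past noise sample.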

The transfer function of model 1 is therefore,

$latex \displaystyle{\frac{X(Z)}{W(Z)}=\frac{1}{1-Z^{-1}}} &s=2$

where B=1, A=[1 -1] and the input W is white noise, which can be generated using the randn function. Therefore, the above model can be implemented with the command x=filter(1,[1 -1],randn(1000,1)), which generates 1000 samples of x[n].

A=[1 -1]; %model co-effs

% generating using numerator/denominator form with noise

x1 = filter(1,A,randn(1000,1));

plot(x1,'b');

Model 2: a0=1, a1=0.5

$latex x[n]- 0.5 x[n-1] = w[n] \quad\quad w[n] \sim N(0,\sigma^2) &s=2$

The transfer function of this model is

$latex \displaystyle{\frac{X(Z)}{W(Z)}=\frac{1}{1-0.5 Z^{-1}}} &s=2$

where B=1, A=[1 -0.5] and the input W is white noise, which can be generated using the randn function. Therefore, the above model can be implemented with the command x=filter(1,[1 -0.5],randn(1000,1)) to generate 1000 samples of x[n].

A=[1 -0.5]; %model co-effs

% generating using numerator/denominator form with noise

x2 = filter(1,A,randn(1000,1));

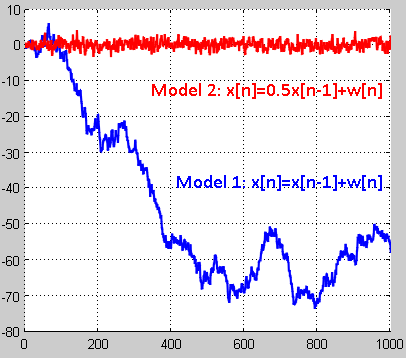

plot(x2,’r’);In the plot above, the output from model 1 exhibits persistence or positive correlation – positive deviations from mean tend to be followed by positive deviations for some duration and the negative deviations from mean tend to be followed by negative deviations for sometime. When the positive deviations are followed by negative deviations or vice-versa, it is a characteristic of negative correlation. Positive correlations are strong indications of long runs of several consecutive observations above or below mean. Negative correlations indicate low incidence of such runs. The output of the model 2 always jumps around the mean value and there is no consistent departure from the mean – no persistence (no positive correlation). The interpretation of time series plots for clues on persistence is a subjective matter and is left for trained eyes. However, it can be considered as a preliminary analysis.
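The link between persistence and long runs can be checked numerically. This minimal sketch assumes both models are simulated with unit-variance Gaussian noise from a zero start. It measures the average length of runs of consecutive samples lying on the same side of the sample mean. The helper names and seeds are illustrative.

```python
import random


def simulate_ar1(a: float, n: int, seed: int) -> list[float]:
    """x[n] = a*x[n-1] + w[n] with w[n] ~ N(0, 1), starting from x[-1] = 0."""
    rng = random.Random(seed)
    x, prev = [], 0.0
    for _ in range(n):
        prev = a * prev + rng.gauss(0.0, 1.0)
        x.append(prev)
    return x


def mean_run_length(x: list[float]) -> float:
    """Average length of runs of consecutive samples above (or at/below) the mean."""
    m = sum(x) / len(x)
    above = [v > m for v in x]
    runs, current = [], 1
    for prev, cur in zip(above, above[1:]):
        if cur == prev:
            current += 1          # still on the same side of the mean
        else:
            runs.append(current)  # side changed: close the current run
            current = 1
    runs.append(current)
    return sum(runs) / len(runs)


if __name__ == "__main__":
    print("model 1:", mean_run_length(simulate_ar1(1.0, 1000, seed=1)))
    print("model 2:", mean_run_length(simulate_ar1(0.5, 1000, seed=2)))
```

For a Gaussian AR(1) with coefficient 0.5, consecutive samples fall on opposite sides of the true mean with probability 1/3, so model 2's runs average about three samples, while the random walk of model 1 produces much longer runs.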

Persistence – an indication of non-stationarity:

For time series analysis, it is important to work with stationary processes. Many theorems in statistical signal processing assume that a series is stationary (at least in the weak sense). Loosely, a process is stationary if its probability distribution does not change with time. More precisely, a process is strict-sense stationary (SSS) if its joint probability distribution is unchanged by any shift in time, and weak-sense stationary (WSS) if its mean is constant and its autocorrelation depends only on the lag. A strongly persistent series such as the output of model 1 wanders away from its starting level, so its local mean drifts and its variance grows with time. Such a series is non-stationary, and many results in signal processing do not apply to it directly.
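The non-stationarity of model 1 can also be seen analytically. Assuming $latex a_0=1$, $latex c=0$, a zero initial condition x[-1] = 0, and noise w[n] independent of past outputs, taking the variance of both sides of the AR(1) recursion gives the first line below; iterating it yields the other two.

$latex \mathrm{Var}(x[n]) = a_1^2\,\mathrm{Var}(x[n-1]) + \sigma^2 &s=2$

$latex a_1 = 1 \;\mbox{(model 1)}: \quad \mathrm{Var}(x[n]) = (n+1)\,\sigma^2 &s=2$

$latex |a_1| < 1 \;\mbox{(model 2)}: \quad \mathrm{Var}(x[n]) \rightarrow \frac{\sigma^2}{1-a_1^2} = \frac{4}{3}\sigma^2 \quad \mbox{for } a_1 = 0.5 &s=2$

The variance of model 1 grows linearly with n, so no single distribution describes all of its samples, while the variance of model 2 settles to a constant.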

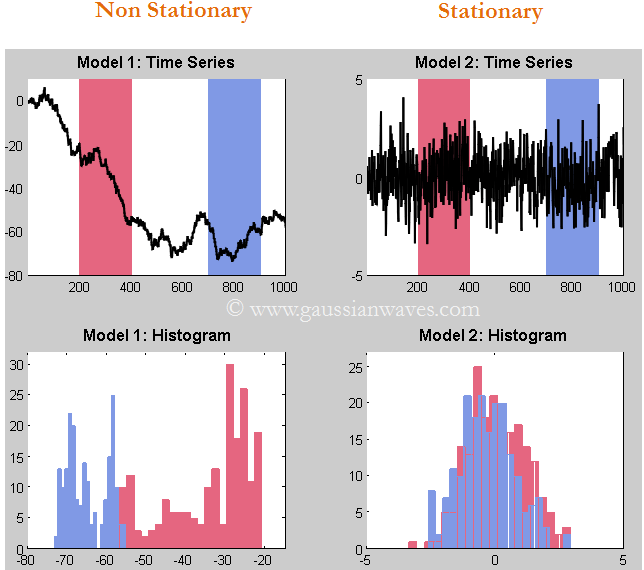

Plotting histograms of different portions of the two series (see figure), we can immediately see that the data generated by model 1 is non-stationary: the histograms of the selected portions differ from one another. The histograms of different portions of the output of model 2, in contrast, are nearly the same, which is consistent with a stationary signal that is suitable for further analysis.
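A numerical counterpart to the histogram comparison, as a minimal sketch: split each simulated series into equal, non-overlapping segments and compare the segment means and variances. For a stationary series they should agree up to sampling error; for the random walk of model 1 they typically drift apart. The segment count, series length, seeds and helper names are assumptions of the sketch.

```python
import random
import statistics


def simulate_ar1(a: float, n: int, seed: int) -> list[float]:
    """x[n] = a*x[n-1] + w[n] with w[n] ~ N(0, 1), starting from x[-1] = 0."""
    rng = random.Random(seed)
    x, prev = [], 0.0
    for _ in range(n):
        prev = a * prev + rng.gauss(0.0, 1.0)
        x.append(prev)
    return x


def segment_stats(x: list[float], segments: int) -> list[tuple[float, float]]:
    """(mean, variance) of each of `segments` equal, non-overlapping chunks of x."""
    size = len(x) // segments
    chunks = [x[i * size:(i + 1) * size] for i in range(segments)]
    return [(statistics.fmean(c), statistics.variance(c)) for c in chunks]


if __name__ == "__main__":
    for name, a, seed in (("model 1", 1.0, 1), ("model 2", 0.5, 2)):
        stats = segment_stats(simulate_ar1(a, 4000, seed), segments=4)
        print(name, [(round(m, 2), round(v, 2)) for m, v in stats])
```

For model 2 every segment variance should sit near the theoretical 4/3; for model 1 the segment means usually differ by many units.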

Lagged Scatter Plots

The autocorrelation structure can also be examined with lagged scatter plots. In a lagged scatter plot, each sample x[n] of the series is plotted against the sample x[n+k] that follows it k steps later, one lag k at a time. Strong positive autocorrelation at a given lag shows up as points clustered along a line with positive slope, while a structureless cloud of points indicates no correlation at that lag.

figure;

x12 = x1(1:end-1);

x21 = x1(2:end);

x13 = x1(1:end-2);

x31 = x1(3:end);

x14 = x1(1:end-3);

x41 = x1(4:end);

x15 = x1(1:end-4);

x51 = x1(5:end);

subplot(2,2,1)

plot(x12,x21,'b*');

xlabel('X_1'); ylabel('X_2');

subplot(2,2,2)

plot(x13,x31,'b*');

xlabel('X_1'); ylabel('X_3');

subplot(2,2,3)

plot(x14,x41,'b*');

xlabel('X_1'); ylabel('X_4');

subplot(2,2,4)

plot(x15,x51,'b*');

xlabel('X_1'); ylabel('X_5');

figure;

x12 = x2(1:end-1);

x21 = x2(2:end);

x13 = x2(1:end-2);

x31 = x2(3:end);

x14 = x2(1:end-3);

x41 = x2(4:end);

x15 = x2(1:end-4);

x51 = x2(5:end);

subplot(2,2,1)

plot(x12,x21,'b*');

xlabel('X_1'); ylabel('X_2');

subplot(2,2,2)

plot(x13,x31,'b*');

xlabel('X_1'); ylabel('X_3');

subplot(2,2,3)

plot(x14,x41,'b*');

xlabel('X_1'); ylabel('X_4');

subplot(2,2,4)

plot(x15,x51,'b*');

xlabel('X_1'); ylabel('X_5');

The scatter plots of model 1 for the first four lags indicate strong positive correlation at all four lags. The scatter plots of model 2 show a weaker positive correlation at lag 1 and little or no correlation at the remaining lags. This trend can be seen more clearly by plotting the AutoCorrelation Function (ACF).
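The visual impression from the scatter plots can be quantified by the Pearson correlation coefficient of each pair of lagged vectors, i.e. of x[n] against x[n+k]. The sketch below is plain Python rather than the article's Matlab. It assumes the same two AR(1) models with unit-variance noise and uses hypothetical helper names. For model 2 the values should approach 0.5^k.

```python
import math
import random


def simulate_ar1(a: float, n: int, seed: int) -> list[float]:
    """x[n] = a*x[n-1] + w[n] with w[n] ~ N(0, 1), starting from x[-1] = 0."""
    rng = random.Random(seed)
    x, prev = [], 0.0
    for _ in range(n):
        prev = a * prev + rng.gauss(0.0, 1.0)
        x.append(prev)
    return x


def lagged_pearson(x: list[float], k: int) -> float:
    """Pearson correlation of the pairs (x[n], x[n+k]) shown in a lag-k scatter plot."""
    if not 1 <= k < len(x) - 1:
        raise ValueError("lag k must satisfy 1 <= k < len(x) - 1")
    u, v = x[:-k], x[k:]
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    cov = sum((p - mu) * (q - mv) for p, q in zip(u, v))
    su = math.sqrt(sum((p - mu) ** 2 for p in u))
    sv = math.sqrt(sum((q - mv) ** 2 for q in v))
    return cov / (su * sv)


if __name__ == "__main__":
    x1 = simulate_ar1(1.0, 1000, seed=1)
    x2 = simulate_ar1(0.5, 1000, seed=2)
    for k in range(1, 5):
        print(f"lag {k}: model 1 {lagged_pearson(x1, k):.2f}, "
              f"model 2 {lagged_pearson(x2, k):.2f} (theory {0.5 ** k:.3f})")
```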

Auto Correlation Function (ACF) or Correlogram

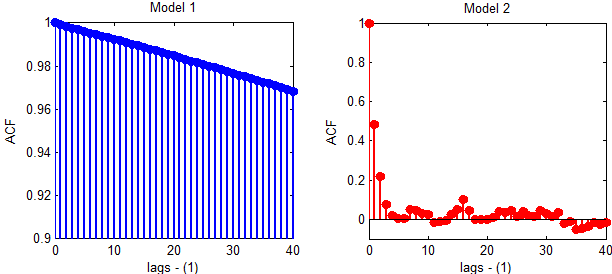

An ACF plot summarizes the correlation of a time series with itself at various lags: for each lag k = 0, 1, 2, ..., it plots the correlation coefficient between the series and a copy of itself delayed by k samples. The code below plots the ACF of the outputs of both models.
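For reference, a commonly used sample estimator of the ACF at lag k, for N samples with sample mean $latex \bar{x}$, is the first expression below. Applied to a mean-removed series, Matlab's xcorr(x,'coeff') computes this same normalized quantity. The second expression is the theoretical ACF of a stationary AR(1) process with $latex a_0=1$ and $latex |a_1|<1$, such as model 2, which gives 0.5, 0.25 and 0.125 at lags 1, 2 and 3.

$latex \displaystyle{r_k=\frac{\sum_{n=0}^{N-1-k}\left(x[n]-\bar{x}\right)\left(x[n+k]-\bar{x}\right)}{\sum_{n=0}^{N-1}\left(x[n]-\bar{x}\right)^2}}, \quad k=0,1,2,\ldots &s=2$

$latex \rho_k = a_1^{k}, \quad k=0,1,2,\ldots &s=2$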

% xcorr does not remove the mean itself, so subtract it first

[x1c,lags] = xcorr(x1-mean(x1),100,'coeff');

%Plotting only non-negative lag values - autocorrelation is symmetric

figure; stem(lags(101:end),x1c(101:end));

[x2c,lags] = xcorr(x2-mean(x2),100,'coeff');

figure; stem(lags(101:end),x2c(101:end));

The ACF plot of model 1 shows strong persistence across all lags. The ACF of model 2 is large at lag 1 (lag 0 trivially equals 1) and dies out within a few lags, which agrees with the lagged scatter plots.

For a stationary series, the correlogram typically shows only a few significant spikes at small lags and then cuts off or dies down quickly. Thus model 2 produces a stationary series, whereas model 1 does not, and model 2 is therefore suitable for further time series analysis.
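Whether a correlogram "cuts off" is often judged against the approximate 95% band ±1.96/√N, the usual large-sample bound for the ACF of white noise, so it is only a rough guide here. The minimal sketch below assumes unit-variance Gaussian noise and hypothetical helper names. It removes the sample mean, normalizes by the lag-0 sum so that r_0 = 1, and lists the lags that fall outside that band.

```python
import math
import random


def simulate_ar1(a: float, n: int, seed: int) -> list[float]:
    """x[n] = a*x[n-1] + w[n] with w[n] ~ N(0, 1), starting from x[-1] = 0."""
    rng = random.Random(seed)
    x, prev = [], 0.0
    for _ in range(n):
        prev = a * prev + rng.gauss(0.0, 1.0)
        x.append(prev)
    return x


def sample_acf(x: list[float], max_lag: int) -> list[float]:
    """r_k for k = 0..max_lag: mean removed, normalized so that r_0 = 1."""
    n = len(x)
    m = sum(x) / n
    d = [v - m for v in x]
    c0 = sum(v * v for v in d)
    return [sum(d[i] * d[i + k] for i in range(n - k)) / c0
            for k in range(max_lag + 1)]


def significant_lags(x: list[float], max_lag: int) -> list[int]:
    """Lags k >= 1 with |r_k| above the approximate 95% white-noise bound 1.96/sqrt(N)."""
    bound = 1.96 / math.sqrt(len(x))
    r = sample_acf(x, max_lag)
    return [k for k in range(1, max_lag + 1) if abs(r[k]) > bound]


if __name__ == "__main__":
    x1 = simulate_ar1(1.0, 1000, seed=1)
    x2 = simulate_ar1(0.5, 1000, seed=2)
    print("model 1 r_1..r_5:", [round(v, 2) for v in sample_acf(x1, 5)[1:]])
    print("model 2 r_1..r_5:", [round(v, 2) for v in sample_acf(x2, 5)[1:]])
    print("model 1 significant lags up to 20:", significant_lags(x1, 20))
    print("model 2 significant lags up to 20:", significant_lags(x2, 20))
```

Expect model 1 to exceed the band at every lag shown, and model 2 mainly at the first few lags, with an occasional chance crossing further out.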

Continue reading on constructing an autocorrelation matrix…
